Knowledge Graph Visualization

Natural Language Processing

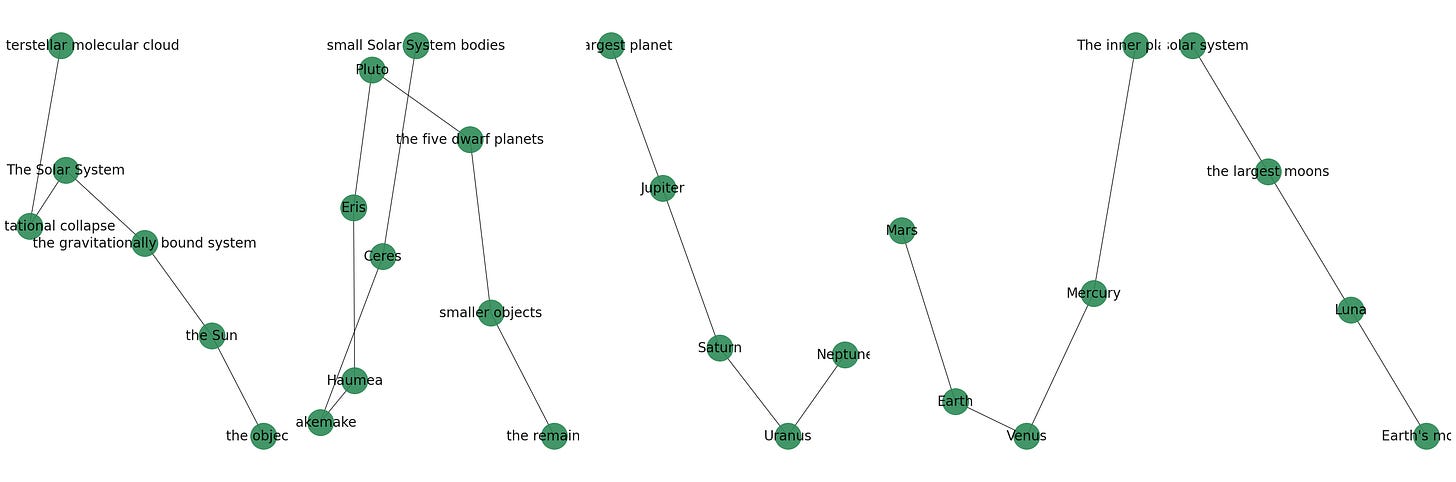

In the Understanding Latent Dirichlet Allocation post, we summarized a chunk of text by ranking its words by an importance score. In this post, we go a step further and organize the concepts in a section of text into a relationship map, much like the one below, which was built from a short text on the solar system:

To generate the above relationship map, information extraction is a crucial step. This involves processing the text to extract entities, the relationships between them, and their attributes. Entities can be people, organizations, locations, or dates, among others. Relationships can be family ties, organizational affiliations, geographic links, or any other connection that is relevant to the context of the text.

The library used in the above example is spaCy, a natural language processing library in Python. It provides functionality for tokenization, part-of-speech tagging, named entity recognition, dependency parsing, and more.

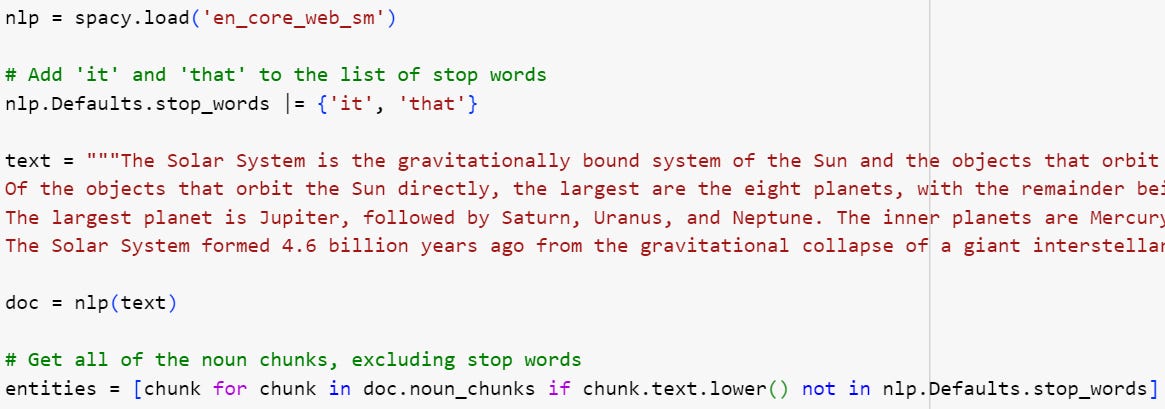

Below is the code chunk used to extract noun chunks from our text above, treating them as the primary entities:
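As a minimal sketch of this step (not necessarily the exact code used for the map above), the noun chunks of each sentence can be collected from a parsed spaCy document. The model name `en_core_web_sm`, the helper names `load_doc`, `normalize` and `extract_entities`, and the choice to drop pronouns and leading articles are all assumptions of this example.

```python
ARTICLES = ("the ", "a ", "an ")


def load_doc(text, model="en_core_web_sm"):
    """Parse text with spaCy. The model name is an assumption of this sketch."""
    import spacy  # imported here so the helpers below work on any doc-like object

    nlp = spacy.load(model)
    return nlp(text)


def normalize(chunk_text):
    """Strip a leading article so 'The Sun' and 'the Sun' map to the same entity."""
    text = chunk_text.strip()
    for article in ARTICLES:
        if text.lower().startswith(article):
            return text[len(article):]
    return text


def extract_entities(doc):
    """Return one list of noun-chunk entities per sentence, in order of appearance."""
    entities_per_sentence = []
    for sent in doc.sents:
        entities = []
        for chunk in sent.noun_chunks:
            # Skip chunks headed by a pronoun ("it", "they"): they name no concept.
            if chunk.root.pos_ == "PRON":
                continue
            name = normalize(chunk.text)
            if name and name not in entities:
                entities.append(name)
        entities_per_sentence.append(entities)
    return entities_per_sentence
```

Calling `extract_entities(load_doc(text))` keeps the entities grouped by sentence, which is convenient later when we link entities that appear together.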

Once the entities are extracted, we use NetworkX, a Python library for network analysis, to construct the knowledge graph.

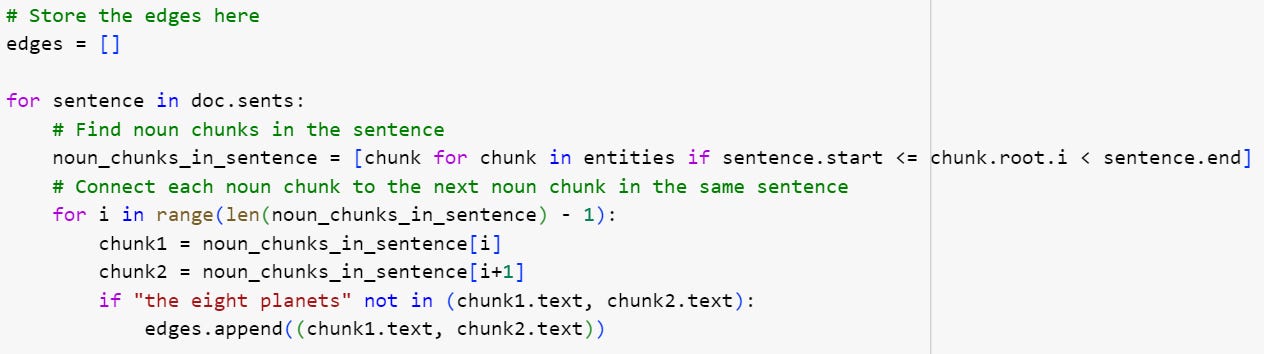

We then form edges between entities based on their co-occurrence in the same sentence.
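The co-occurrence rule can be sketched as follows. This is an illustration under stated assumptions rather than the post's original code: the input is a list of entity lists, one per sentence, and the helper names `cooccurrence_counts` and `build_graph`, as well as storing the counts in a `weight` edge attribute, are choices made for this example.

```python
from collections import Counter
from itertools import combinations


def cooccurrence_counts(entities_per_sentence):
    """Count how often each unordered pair of entities shares a sentence."""
    counts = Counter()
    for entities in entities_per_sentence:
        # Sort the unique entities so each pair has one canonical order.
        unique = sorted(set(entities))
        for a, b in combinations(unique, 2):
            counts[(a, b)] += 1
    return counts


def build_graph(entities_per_sentence):
    """Build an undirected graph with co-occurrence counts as edge weights."""
    import networkx as nx  # local import: only this function needs NetworkX

    graph = nx.Graph()
    for entities in entities_per_sentence:
        graph.add_nodes_from(entities)  # keeps entities that never co-occur
    for (a, b), weight in cooccurrence_counts(entities_per_sentence).items():
        graph.add_edge(a, b, weight=weight)
    return graph
```

Keeping the count as an edge weight means pairs that appear together often can later be drawn with thicker lines than pairs that meet only once.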

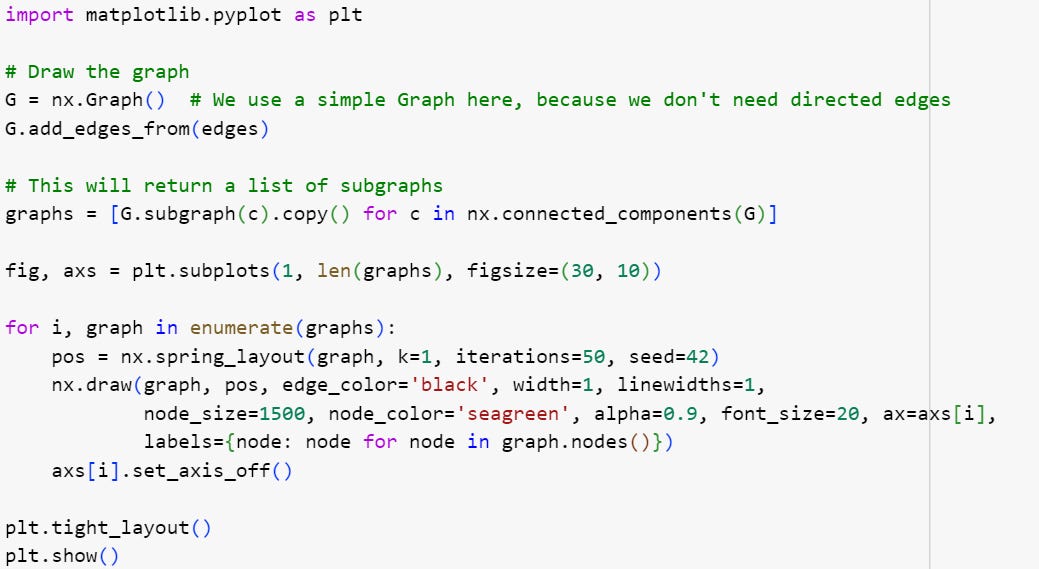

In the final step, we plot the knowledge graph using matplotlib, a commonly used visualization library in Python:
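A minimal sketch of this plotting step is shown here, assuming a NetworkX graph whose edges carry a `weight` attribute. The helper names `edge_widths` and `plot_graph`, the spring layout, and the colors and sizes are assumptions of this example, not necessarily the settings behind the figures in this post.

```python
def edge_widths(weights, min_width=1.0, max_width=5.0):
    """Scale edge weights linearly into a range of line widths."""
    if not weights:
        return []
    lo, hi = min(weights), max(weights)
    if lo == hi:
        # All edges are equally strong, so draw them all at the minimum width.
        return [min_width] * len(weights)
    scale = (max_width - min_width) / (hi - lo)
    return [min_width + (w - lo) * scale for w in weights]


def plot_graph(graph, seed=42):
    """Draw the graph with a force-directed layout and weighted edge widths."""
    import matplotlib.pyplot as plt
    import networkx as nx

    # A fixed seed makes the layout reproducible between runs.
    pos = nx.spring_layout(graph, seed=seed)
    weights = [data.get("weight", 1) for _, _, data in graph.edges(data=True)]

    nx.draw_networkx_nodes(graph, pos, node_color="lightblue", node_size=1500)
    nx.draw_networkx_edges(graph, pos, width=edge_widths(weights))
    nx.draw_networkx_labels(graph, pos, font_size=9)

    plt.axis("off")
    plt.tight_layout()
    plt.show()
```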

Running this code lets us visualize the entities in our text and their relationships, giving a structured and accessible view of the information in the text.

The code could be extended with entity disambiguation, so that it distinguishes the same word used in different contexts, such as Paris the city versus Paris as a person’s name.
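One simple way to approximate this, sketched here as an assumption rather than a full disambiguation system, is to key each graph node by both the entity text and the label that spaCy's named entity recognizer assigns to it (for example `GPE` for a city and `PERSON` for a person in spaCy's English models). The helper name `disambiguated_nodes` is hypothetical, and the result is only as good as the labels the model predicts.

```python
def disambiguated_nodes(doc):
    """Return unique 'text (LABEL)' node names in order of first appearance.

    `doc` is a parsed spaCy document; each entity in `doc.ents` exposes
    its surface text as `.text` and its predicted type as `.label_`.
    """
    nodes = []
    for ent in doc.ents:
        name = f"{ent.text} ({ent.label_})"
        if name not in nodes:
            nodes.append(name)
    return nodes
```

With node names built this way, "Paris (GPE)" and "Paris (PERSON)" become two separate nodes in the graph instead of being merged into one.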

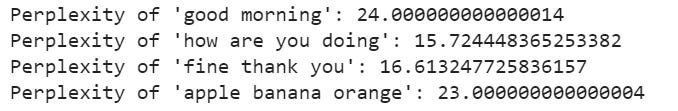

Machine learning models could also be used to perform more context-aware extraction tasks. Below is an example that uses perplexity scores to flag phrases whose score exceeds a threshold, meaning they differ markedly from what the model expects based on its training data.
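To make the idea concrete, here is a small self-contained sketch. Perplexity is the exponential of the average negative log-probability a model assigns to each token, so a high value means the phrase surprised the model. The `UnigramModel` class is a toy stand-in for a real language model, and its add-one smoothing, the whitespace tokenization, and the threshold value are all assumptions of this example.

```python
import math
from collections import Counter


class UnigramModel:
    """Toy stand-in for a language model: add-one smoothed word frequencies."""

    def __init__(self, training_tokens):
        self.counts = Counter(training_tokens)
        self.total = len(training_tokens)
        self.vocab_size = len(self.counts) + 1  # one extra slot for unseen words

    def log_prob(self, token):
        return math.log((self.counts[token] + 1) / (self.total + self.vocab_size))


def perplexity(model, tokens):
    """Exponential of the average negative log-probability per token."""
    if not tokens:
        raise ValueError("perplexity is undefined for an empty token list")
    avg_nll = -sum(model.log_prob(t) for t in tokens) / len(tokens)
    return math.exp(avg_nll)


def flag_unexpected(model, phrases, threshold):
    """Return the phrases whose perplexity exceeds the threshold."""
    return [p for p in phrases if perplexity(model, p.lower().split()) > threshold]


training = "the sun is a star the planets orbit the sun".split()
model = UnigramModel(training)
flagged = flag_unexpected(model, ["the sun", "quantum blockchain"], threshold=10.0)
print(flagged)
```

In practice the unigram model would be replaced by a trained language model that returns per-token log-probabilities, and the threshold would be tuned on text known to be typical.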

AIQ by Nick Polson and James Scott

Data scientists have a favourite saying: all models are wrong, but some models are useful. In other words, no model can describe the real world perfectly, but sometimes the mismatch is important, and sometimes it isn’t. The corollary is that judging whether a model is useful requires knowing both the model and how that model will be used.

The larger point here is that building models is a job fit only for people. A machine can make predictions based on the assumptions with which it’s programmed, but only people can check those assumptions. Good data science requires people and machines working together, because the difference between the model and the reality isn’t always such a casual matter.

CodeChat

CodeChat continues this month and will be held on July 28 at 5pm EST. The topic will be natural language processing and its uses, joiners’ perspectives, and any questions you may have related to coding. This is a great monthly opportunity to connect with other tech enthusiasts and industry professionals. You may sign up here for a meeting reminder, or use the meeting link here if you would like to join directly.

Feedback

The Substackers’ message board is a place where you can share your coding journey with me, so that we can exchange ideas and become better together.

Please open the message board and share your thoughts with me!