While the introduction of AI technologies in recent years has certainly brought convenience to our lives, it has also made it harder to detect content that has been artificially generated and may contain hallucinations.

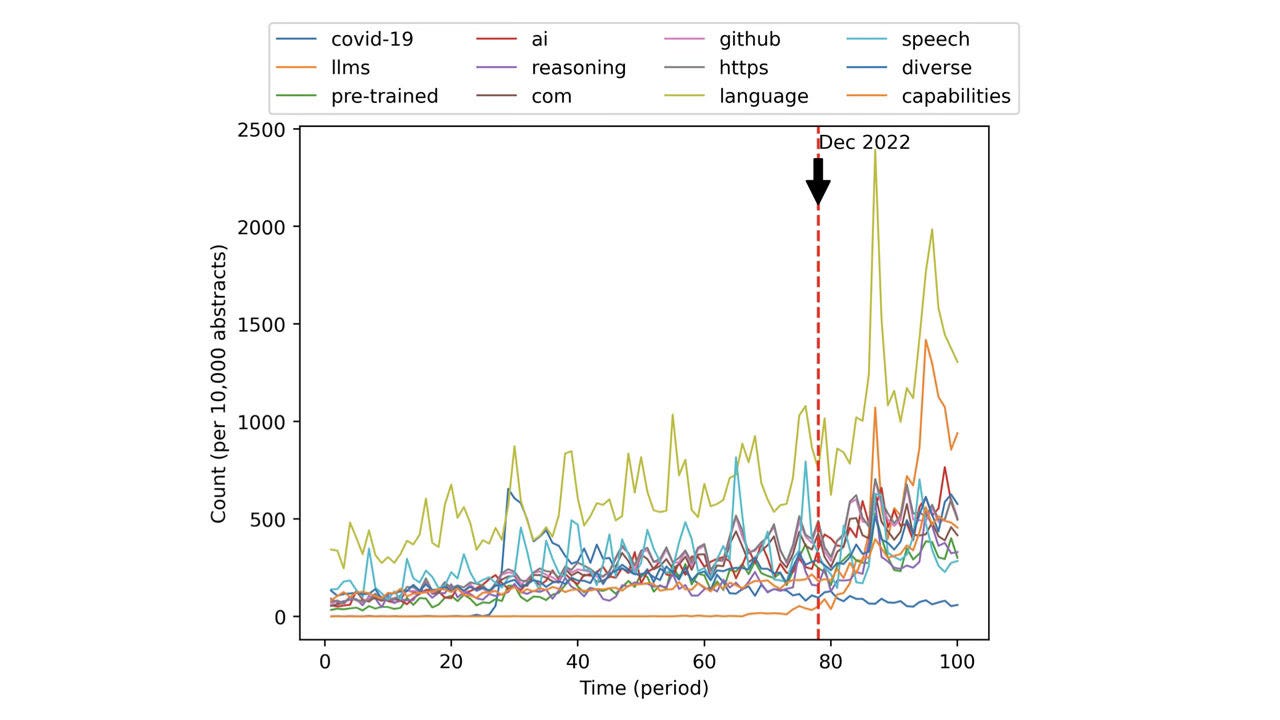

Below is a time series plot showing the usage of 12 key terms in research publications, where a significant rise can be seen since the release of ChatGPT:

In the above plot, the three keywords that have seen the most notable rise are “language”, “llms”, and “ai”.

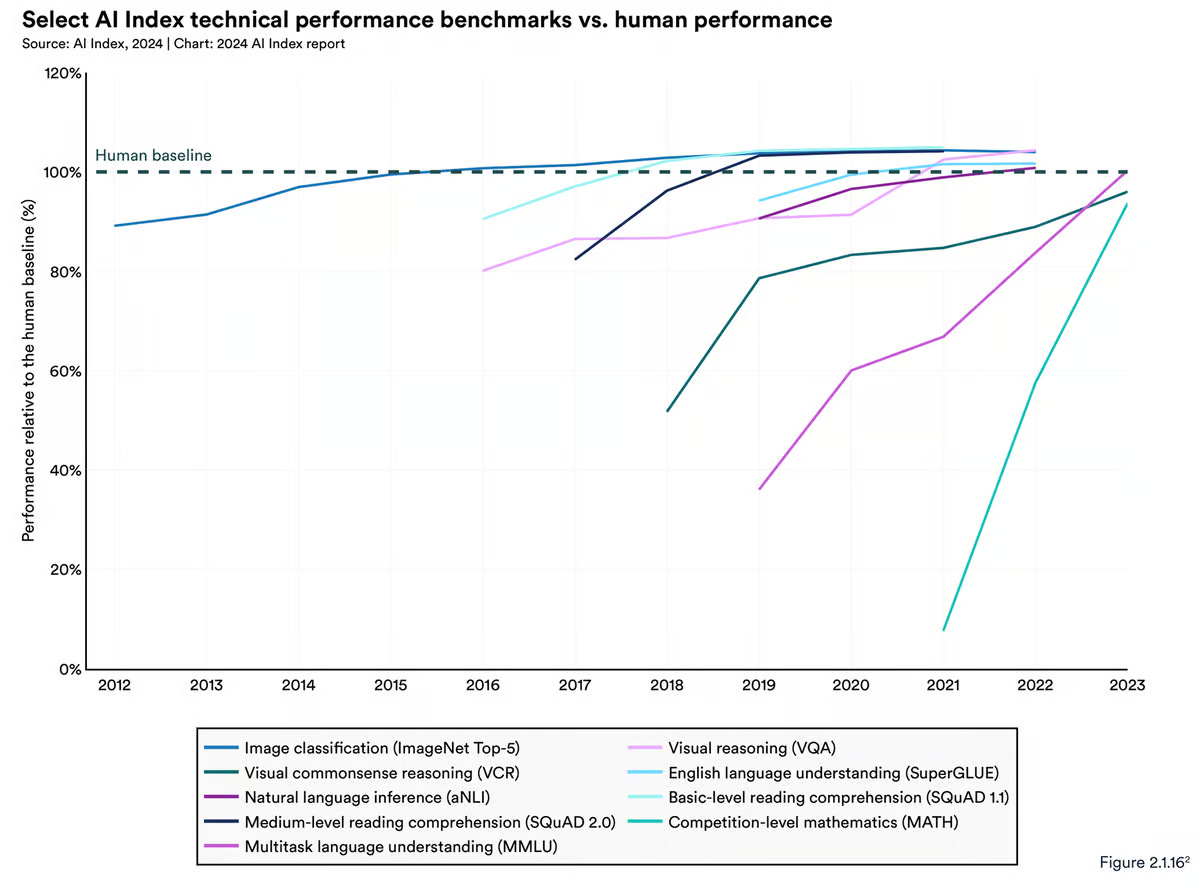

This level of attention to AI from academia is due to the lightning speed at which AI is catching up to human performance, as shown in the plot below:

With this rise in AI and large language model research, AI content detectors are seeing a rise in importance.

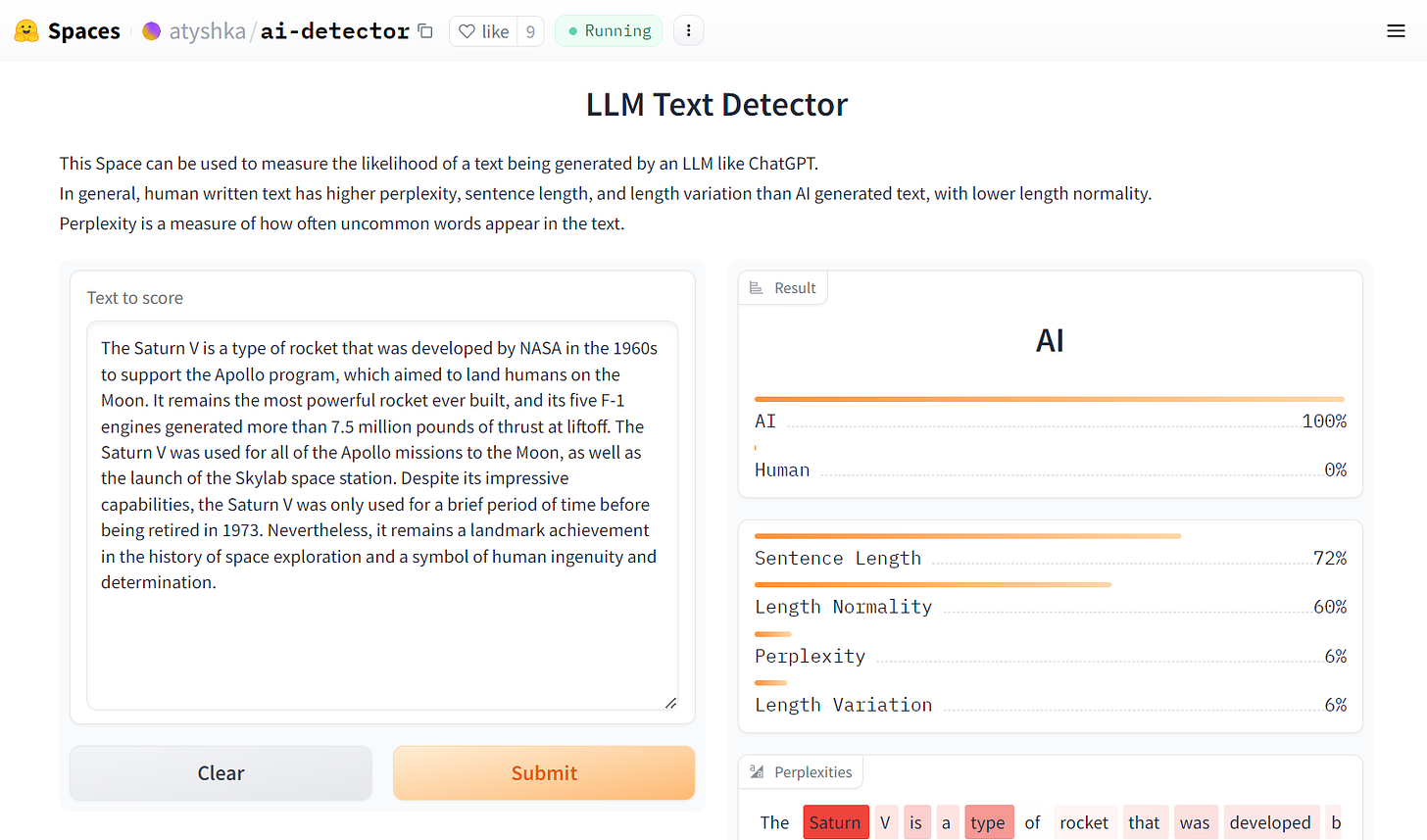

Below is a Large Language Model Text Detector on Hugging Face. It uses metrics including perplexity, sentence length, and length variation to evaluate whether a piece of text was written by a human or by AI.

YouTube Tutorial

The video below walks through the above in streaming format.

The code for this is shown below:

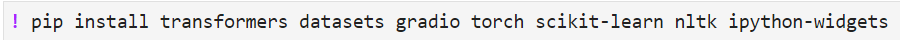

Library Installations

transformers: Provides models for natural language processing

datasets: Strives to provide a large collection of easy-to-use datasets for machine learning and natural language processing

gradio: Enables easy creation of web interfaces for Python functions

torch: Library for deep learning

scikit-learn: Library for machine learning

nltk: Natural Language Toolkit for working with human language data

ipython-widgets: Widgets for Jupyter notebook

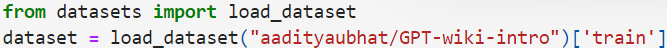

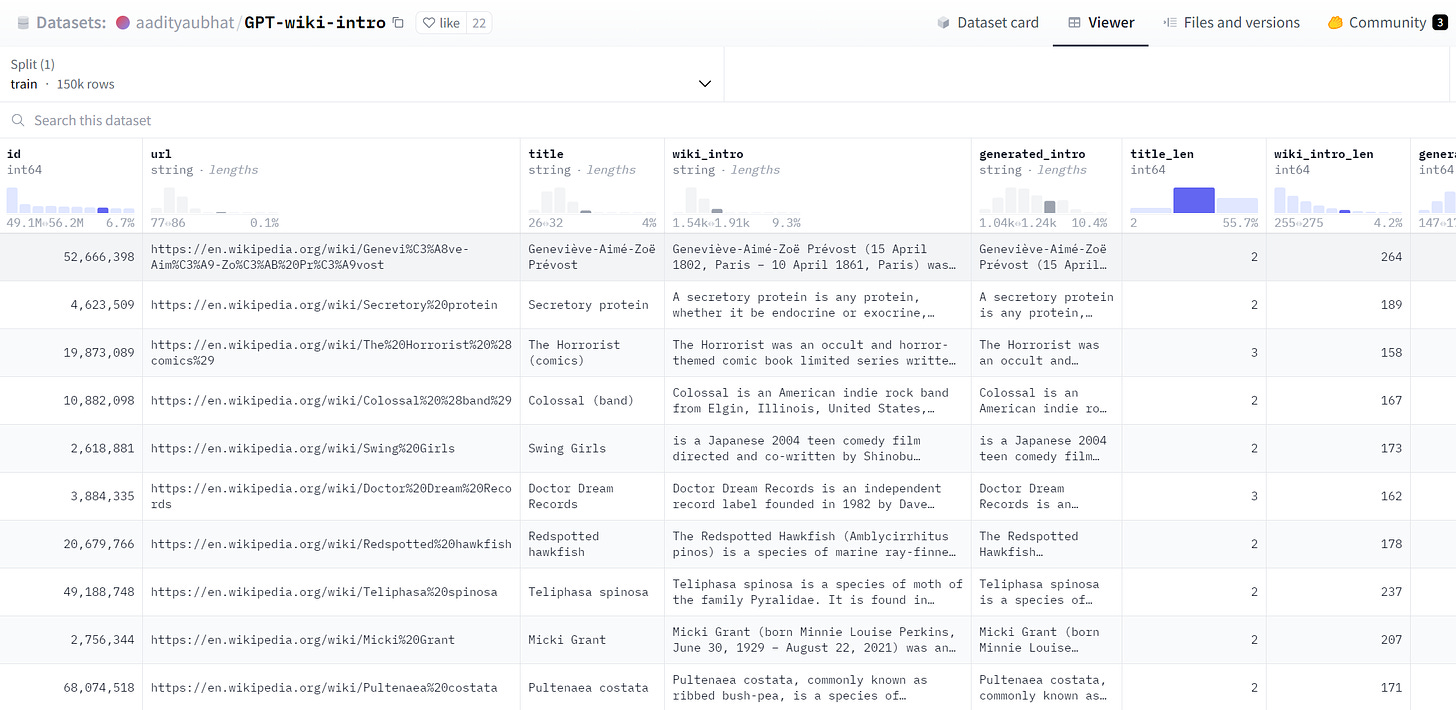

Load Dataset

The above code loads the GPT-wiki-intro dataset from the Hugging Face repository, which contains examples of human generated text in the column [wiki-intro] and GPT-generated text in the column [generated_intro].

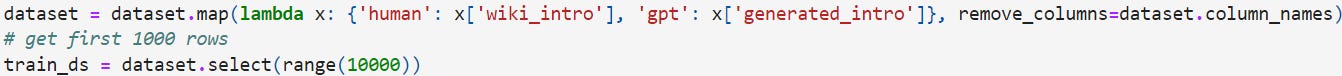

Process Data

With this loaded dataset, all columns except for [wiki-intro] and [generated_intro] are removed and only select the first 10,000 rows for further analysis.
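As a rough sketch of this trimming step, the snippet below uses the Hugging Face `datasets` library. The dataset ID `aadityaubhat/GPT-wiki-intro`, the underscore spelling of the column names, and the helper names `columns_to_drop` and `load_trimmed` are assumptions for illustration and may differ from the code in the screenshot.

```python
from typing import List, Sequence

# Column names are assumed to match the dataset as published on the Hub.
KEEP_COLUMNS = ("wiki_intro", "generated_intro")


def columns_to_drop(all_columns: Sequence[str],
                    keep: Sequence[str] = KEEP_COLUMNS) -> List[str]:
    """Return every column name that is not in `keep`, preserving order."""
    return [name for name in all_columns if name not in keep]


def load_trimmed(dataset_id: str = "aadityaubhat/GPT-wiki-intro",
                 n_rows: int = 10_000):
    """Download the train split, keep the two text columns and the first rows."""
    # Imported lazily because it needs `pip install datasets` and network access.
    from datasets import load_dataset

    train = load_dataset(dataset_id, split="train")
    train = train.remove_columns(columns_to_drop(train.column_names))
    return train.select(range(min(n_rows, len(train))))
```

Calling `load_trimmed()` would return a `Dataset` with two columns and at most 10,000 rows. Keeping the column filter in its own small function makes it easy to check without downloading anything.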

Model Definition - Tokenizer

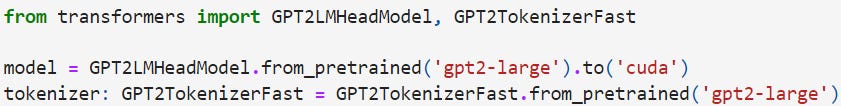

To work with this text-based dataset, the GPT-2 model and its tokenizer below are loaded from their pretrained versions, and the model is moved to the GPU.

Below is an article for an in-depth look into GPT-2 and tokenizers.

Tokenize Text

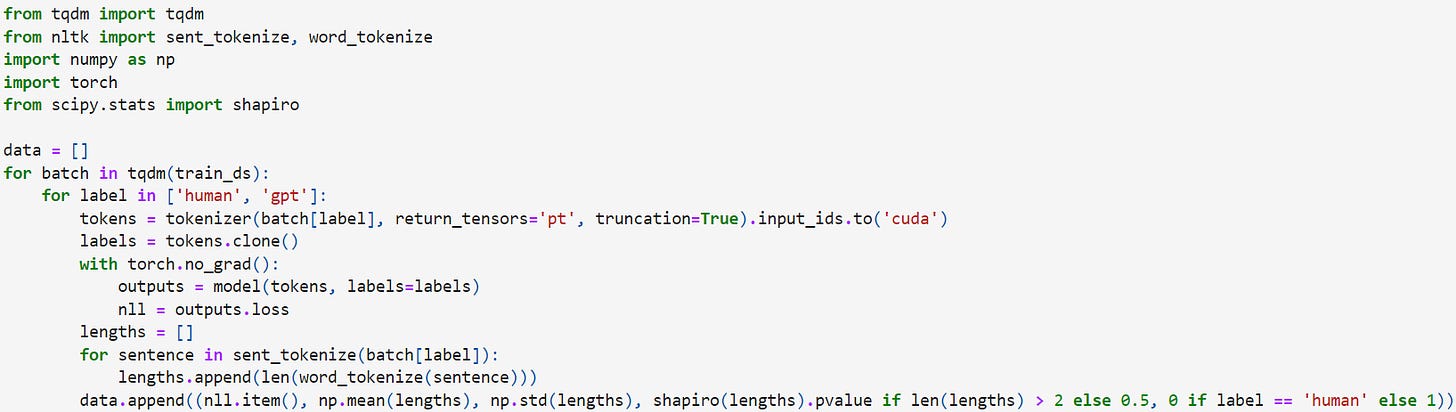

With the GPT-2 model and its tokenizer, the below for loop tokenizes the text, calculates Negative Log Likelihood loss for each entry in the training dataset, and gathers statistics on sentence lengths within each text. These statistics include the mean and standard deviation of sentence lengths, and the Shapiro-Wilk test's p-value for checking normality of sentence lengths. The results are appended to a list, which includes whether the text is human-written or generated by GPT.
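The sentence-length statistics described above can be sketched as follows. This is only an illustration under assumptions: sentence length is counted in whitespace-separated words, the standard deviation is the sample standard deviation, and the function names are hypothetical. The Shapiro-Wilk test comes from SciPy, which is not in the installation list above.

```python
import statistics
from typing import Dict, List


def sentence_length_stats(sentences: List[str]) -> Dict[str, float]:
    """Mean and sample standard deviation of sentence lengths, in words."""
    lengths = [len(s.split()) for s in sentences]
    return {
        "mean_length": statistics.mean(lengths),
        # stdev needs at least two values, so fall back to 0.0 otherwise
        "std_length": statistics.stdev(lengths) if len(lengths) > 1 else 0.0,
    }


def shapiro_p_value(sentences: List[str]) -> float:
    """Shapiro-Wilk p-value for the sentence lengths (needs 3 or more values)."""
    # Imported lazily because it needs `pip install scipy`.
    from scipy.stats import shapiro

    lengths = [len(s.split()) for s in sentences]
    return float(shapiro(lengths).pvalue)
```

A low p-value suggests that the sentence lengths are unlikely to be normally distributed, which is why it can serve as one more feature alongside the mean and standard deviation.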

Visualize Statistics

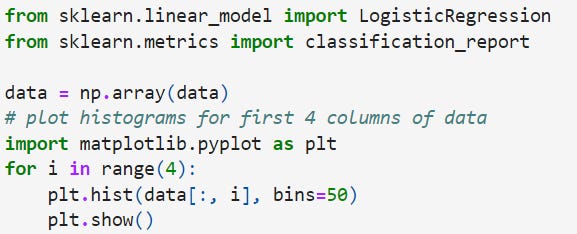

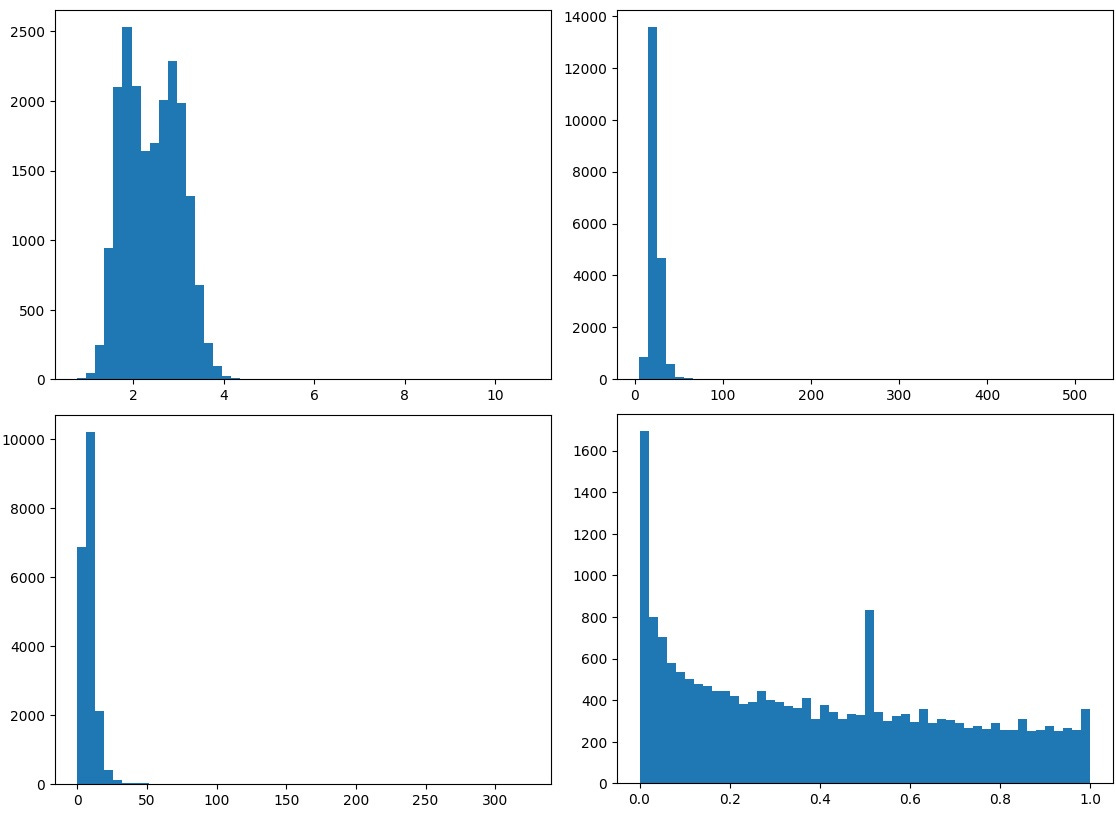

The below code chunk visualizes the Negative Log Likelihood loss, mean and standard deviation of sentence lengths, and the Shapiro-Wilk test's p-value.

Model Definition - Logistic Regression & Neural Network

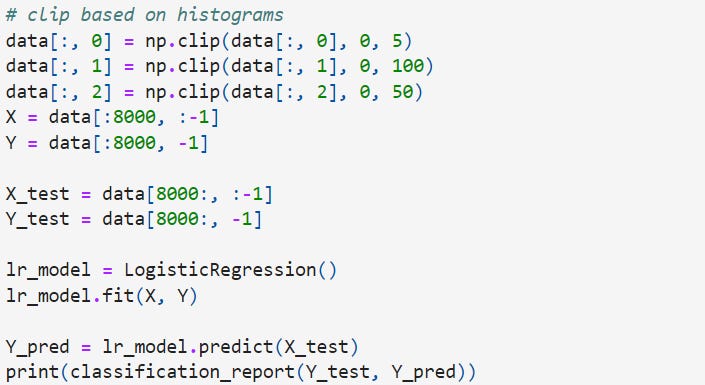

The below logistic regression model is trained to predict whether a text is human or GPT-generated based on the above statistics.
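For readers who cannot see the screenshot, here is a minimal sketch of fitting such a classifier with scikit-learn. The feature values are synthetic placeholders chosen only so that the two classes are separable; they are not the article's data or results, and the column order is an assumption.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

# Synthetic toy features, NOT the article's data. Columns stand in for:
# [NLL loss, mean sentence length, std of sentence length, Shapiro-Wilk p-value]
rng = np.random.default_rng(0)
spread = [0.3, 2.0, 1.0, 0.05]
human = rng.normal(loc=[3.5, 22.0, 9.0, 0.3], scale=spread, size=(50, 4))
gpt = rng.normal(loc=[2.5, 18.0, 5.0, 0.6], scale=spread, size=(50, 4))

X = np.vstack([human, gpt])
y = np.array([0] * 50 + [1] * 50)  # 0 = human-written, 1 = GPT-generated

clf = LogisticRegression(max_iter=1000)
clf.fit(X, y)

# predict_proba returns one row per text: [P(human), P(GPT)]
probs = clf.predict_proba(X[:1])
```

Because the classes are labeled 0 and 1, the first column of `probs` is the estimated probability of human-written text and the second is the probability of GPT-generated text.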

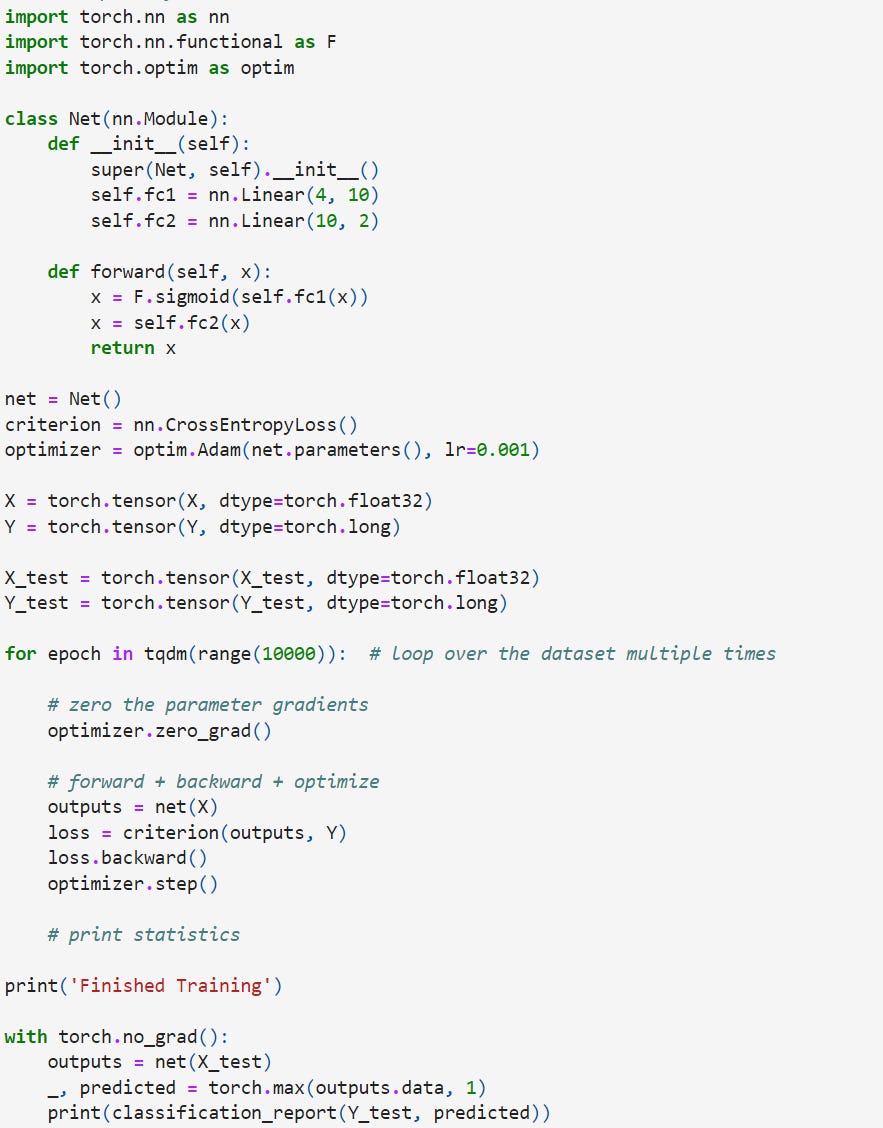

The below code presents a simple feed-forward neural network, which is trained on the same data as the logistic regression model to perform the same classification task, as an alternative model.

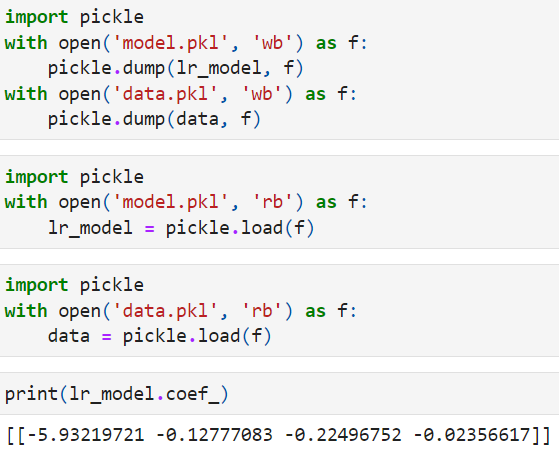

Serialize Logistic Regression Model

The lines of code below that use ‘wb’ (write-binary) serialize the logistic regression model stored in lr_model, as well as the data stored in ‘data’, and write them to the model.pkl and data.pkl files. This saves the full state of the model and data, including the learned coefficients and configuration, so that they can be loaded later without any loss of information. The lines of code with ‘rb’ (read-binary) read the binary data stored in the model.pkl and data.pkl files. The pickle.load() function is then used to deserialize the contents of these files back into Python objects. This allows the coefficients of the logistic regression model to be printed.

Saving the model this way allows training to be done once, while predictions are performed repeatedly over time.
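The write-then-read round trip can be illustrated with a small self-contained sketch. Here a plain dictionary with made-up values stands in for the fitted model, since any picklable Python object is handled the same way, and a temporary directory is used so that no files are left behind.

```python
import os
import pickle
import tempfile

# Hypothetical stand-in for the fitted model; the values are made up.
lr_model = {"coef": [0.8, -0.1, 0.3, 1.2], "intercept": -0.5}

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "model.pkl")

    # 'wb' opens the file for writing in binary mode; dump serializes the object
    with open(path, "wb") as f:
        pickle.dump(lr_model, f)

    # 'rb' opens the file for reading in binary mode; load rebuilds the object
    with open(path, "rb") as f:
        restored = pickle.load(f)
```

The restored object is an equal copy rather than the same object in memory. Note that pickle files should only be loaded from sources you trust, because unpickling can execute arbitrary code.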

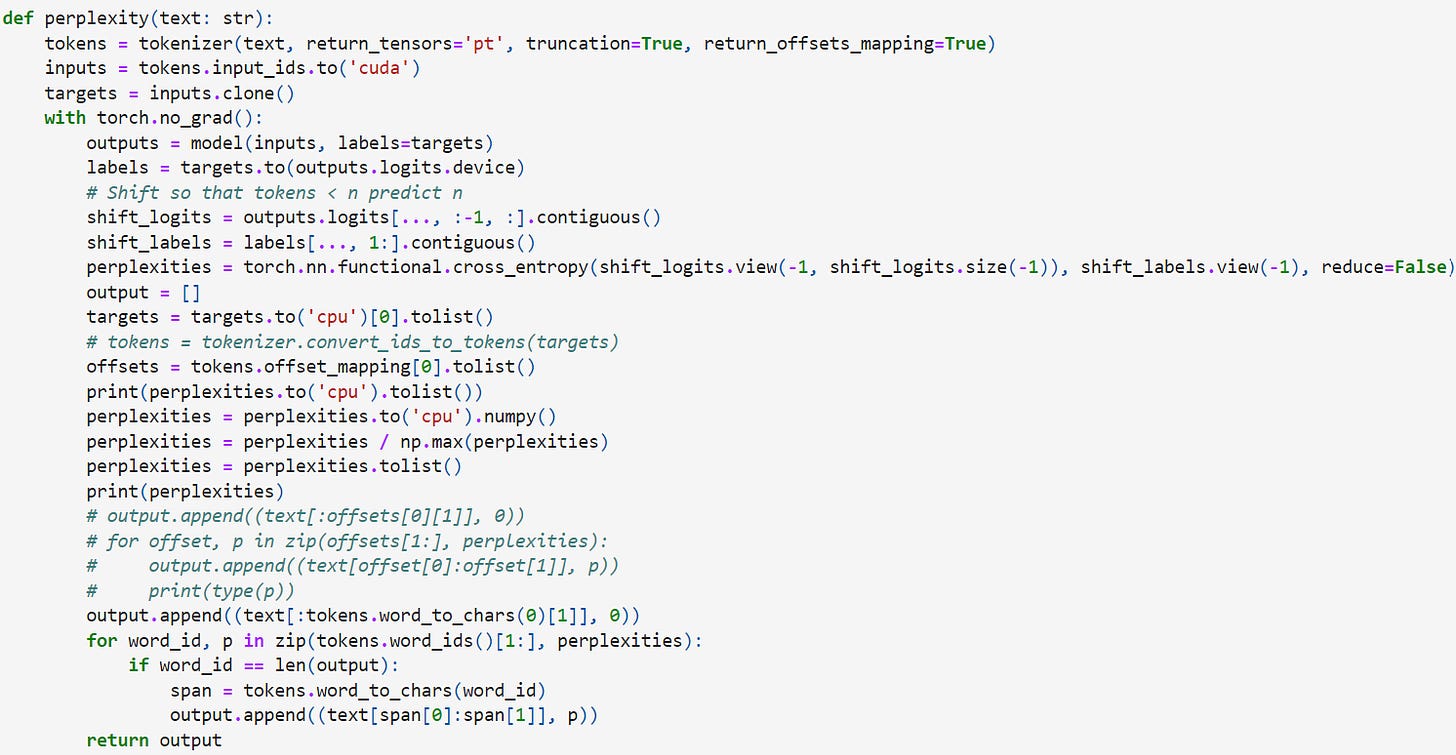

Set Up Training Loop to Calculate Perplexity

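The below for loop calculates the perplexity of each word in a given text using a pre-trained GPT-2 model. Perplexity measures how surprising the text is to the model: it is the exponential of the average negative log-likelihood, so text full of words the model did not expect scores higher.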
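To make the quantity concrete, the standard definition of perplexity is the exponential of the mean negative log-likelihood of the tokens. The sketch below works on a list of per-token log-probabilities, which in the article's setting would come from GPT-2; the function names are hypothetical and the screenshot's implementation may differ.

```python
import math
from typing import List


def sequence_perplexity(token_log_probs: List[float]) -> float:
    """Exponential of the mean negative log-likelihood over all tokens."""
    nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(nll)


def per_token_perplexity(token_log_probs: List[float]) -> List[float]:
    """exp(-log p) for each token, i.e. 1 / p: large when a token was unlikely."""
    return [math.exp(-lp) for lp in token_log_probs]


# A model that gives every token probability 1/4 has perplexity of about 4
uniform = [math.log(0.25)] * 5
```

Intuitively, a perplexity of 4 means the model was as uncertain as if it were choosing uniformly among four options at every step.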

Calculate AI Generated Probability with Logistic Regression

The below loop analyzes a given text to determine its language characteristics based on the trained GPT-2 model and returns the probability estimates of the text being human-written or machine-generated using a logistic regression model.

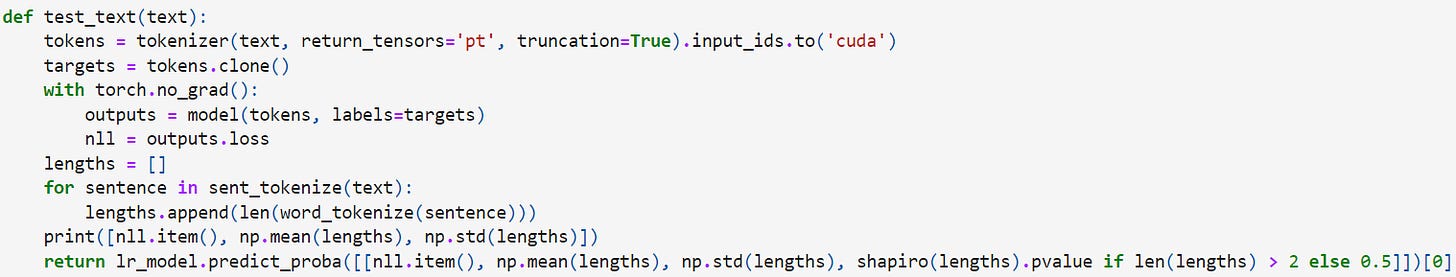

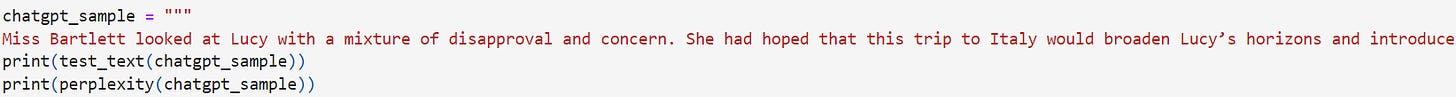

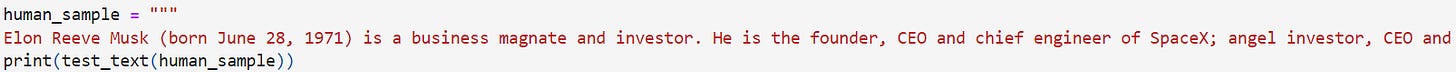

Analyze AI Generated Text Sample

Based on the above analysis, the below code chunk analyzes a text sample generated by ChatGPT.

test_text analyzes the input text to evaluate its linguistic characteristics based on the pre-trained GPT-2 model. It processes the text to calculate its negative log-likelihood loss and the average and standard deviation of its sentence lengths, and conducts the Shapiro-Wilk test to evaluate the normality of the sentence length distribution. It then feeds these statistics to the logistic regression model to predict the probabilities of the text being human-written or AI-generated.

The perplexity function calculates the perplexity of each word in the given text. This quantifies how well the language model predicts each word, reflecting how expected or surprising each word is given the preceding context. The perplexity function outputs a list of tuples, each containing a segment of the text and its associated normalized perplexity value, which helps in understanding which parts of the text are more or less predictable.

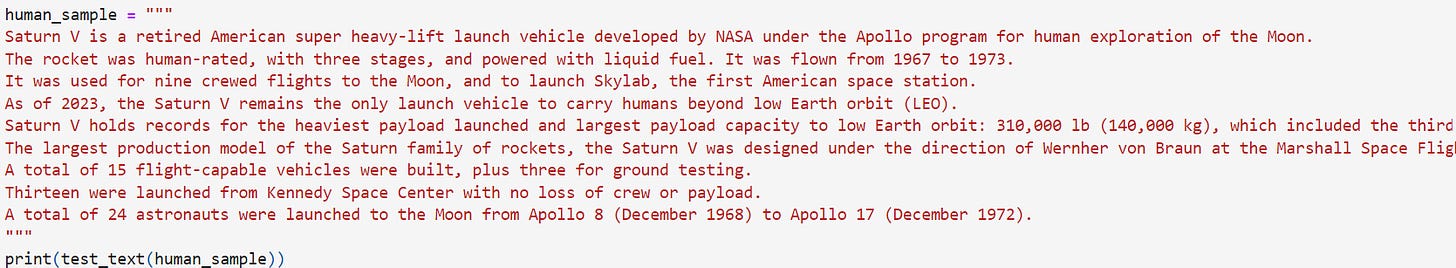

Analyze Human Written Text Sample

Below is the exercise of running the test_text function on two human-written text samples.

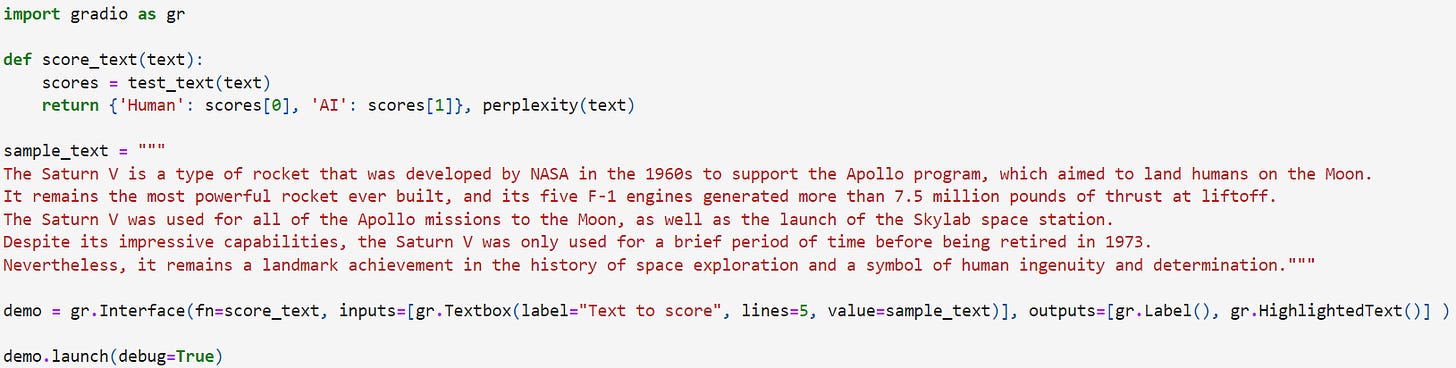

Create Web Interface

Now that both machine-generated and human-written samples have been tested with the test_text and perplexity functions, the below code creates a simple web interface using Gradio.

The score_text function takes a string input and processes it using the test_text and perplexity functions defined previously. It then returns two items:

A dictionary with ‘Human’ and ‘AI’ mapped to their respective scores, and

The result from the perplexity function, which would be a list of tuples showing text segments and their perplexity scores

The sample_text serves as the default text in the Gradio interface’s textbox, giving users an example of the kinds of text the app can process.

The gr.Interface function creates the web interface, where:

fn (function) calls the score_text function from above.

‘inputs’ defines the inputs for the interface as a textbox where users can enter or modify text.

‘outputs’ specifies the format of the outputs, where gr.Label() will display the dictionary returned by ‘score_text’ showing scores for Human and AI generated text, and gr.HighlightedText() will display the text with its segments colored or highlighted based on their perplexity.

demo.launch starts the web server and opens the interface in the default web browser, and debug=True enables debug mode providing useful debugging information if errors occur.
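The wiring described above can be sketched roughly as follows. This assumes that test_text returns a pair of probabilities in the order (human, AI) and that perplexity returns a list of (segment, score) tuples; `make_score_text` and `build_demo` are hypothetical helper names, not taken from the original code.

```python
from typing import Callable, Dict, List, Tuple

Segments = List[Tuple[str, float]]


def make_score_text(
    test_text: Callable[[str], Tuple[float, float]],
    perplexity: Callable[[str], Segments],
) -> Callable[[str], Tuple[Dict[str, float], Segments]]:
    """Wrap the two analysis functions into the single function Gradio calls."""

    def score_text(text: str) -> Tuple[Dict[str, float], Segments]:
        human_score, ai_score = test_text(text)  # assumed return order
        return {"Human": human_score, "AI": ai_score}, perplexity(text)

    return score_text


def build_demo(score_text, sample_text: str = ""):
    """Build the interface; call .launch(debug=True) on the result to serve it."""
    # Imported lazily because it needs `pip install gradio`.
    import gradio as gr

    return gr.Interface(
        fn=score_text,
        inputs=gr.Textbox(value=sample_text, lines=8),
        outputs=[gr.Label(), gr.HighlightedText()],
    )
```

The dictionary feeds gr.Label(), which shows the two classes with their scores, and the list of tuples feeds gr.HighlightedText(), which colors each segment by its score.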

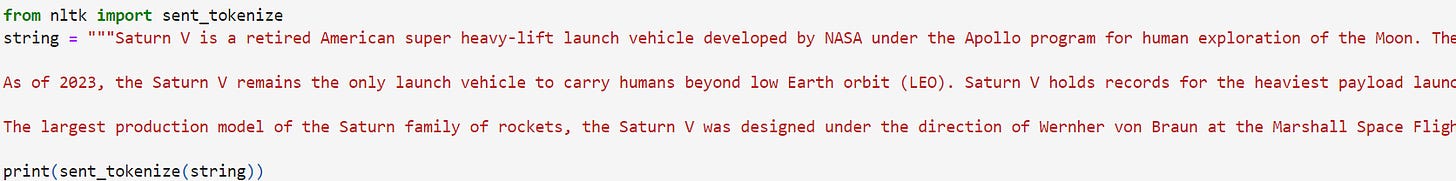

Divide Text into Sentences

The above code divides text into sentences by examining punctuation marks such as periods, question marks, and exclamation points, along with capitalization and spacing.
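One simple way to express such a rule is a regular expression that splits after a period, question mark, or exclamation point when it is followed by whitespace and then a capital letter. This is a naive sketch with a hypothetical function name, not necessarily the approach in the screenshot, and it will split incorrectly after abbreviations such as "Dr.".

```python
import re
from typing import List

# Split after ., ? or ! when followed by whitespace and then a capital letter
# (optionally preceded by an opening quote or bracket).
_SENTENCE_BOUNDARY = re.compile(r"(?<=[.!?])\s+(?=[\"'(\[]?[A-Z])")


def split_sentences(text: str) -> List[str]:
    """Naive rule-based sentence splitter using punctuation, spacing, and capitals."""
    pieces = _SENTENCE_BOUNDARY.split(text.strip())
    return [piece.strip() for piece in pieces if piece.strip()]
```

For example, `split_sentences("It works. Does it? Yes! version 2.5 is out.")` keeps "Yes! version 2.5 is out." together, because the text after "Yes!" starts with a lowercase letter and the period inside "2.5" is not followed by a space.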

You Look Like a Thing and I Love You

There are cases in which neural networks have independently arrived at some of the same strategies that neuroscientists have discovered in animal brains.

In 1997, researchers Anthony Bell and Terrence Sejnowski trained a neural network to look at various natural scenes, such as trees and leaves. Without being specifically told to do so, the artificial neural network arrived at some of the same visual processing tricks that animals use.

Google DeepMind researchers discovered that when they built algorithms that were supposed to learn to navigate, they spontaneously developed grid-cell representations that resemble those in some mammal brains.

Even brain surgery works on neural networks, in a manner of speaking. When researchers looked at neurons in an image-generating neural network, they were able to identify individual neurons that generated trees, domes, bricks, and towers.
