Creating Poetry with AI

Gen AI

In the era of AI, creating poetry is not solely reserved for human poets. AI models can now generate text that resembles the structure of poems. Below is one that was generated by the GPT2 package in python.

GPT stands for Generative Pretrained Transformer and it is a large language model with 1.5 billion parameters. It’s pretrained on a diverse range of internet text and can be fine-tuned with any text data.

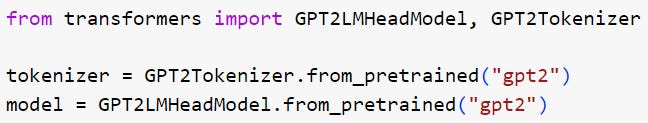

This poetry generating algorithm is coded with the GPT2 head language model as well as the GPT2 tokenizer from the transformers library that’s developed by the company Hugging Face. The GPT2 tokenizer is used to convert the inputted text into tokens, and the GPT2 head language model will generate the poetry.

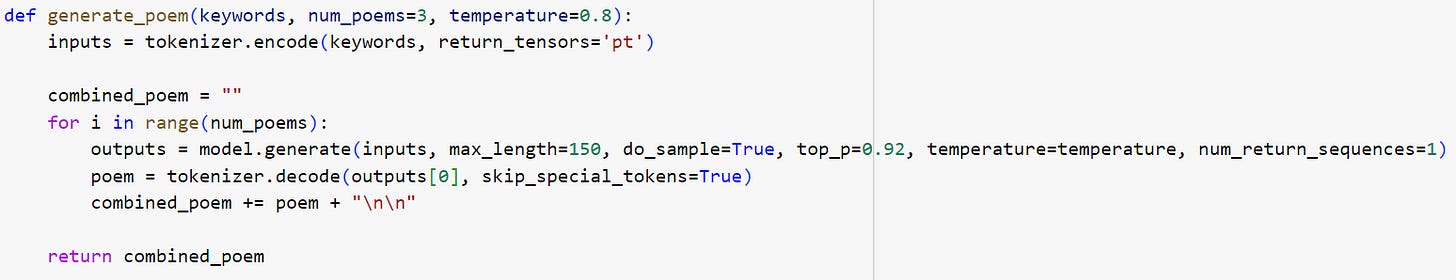

Once we have the model and tokenizer defined, we would be ready to define the poetry generation function.

The line inputs = tokenizer.encode(keywords, return_tensors=’pt’) converts the keyword into a format that the model can understand using the tokenizer’s encode method.

model.generate in the outputs = model.generate line would then generate the poem.

You can see there are several parameters in the model.generate function, including inputs, max_length, do_sample, top_p, temperature, and num_return_sequences.

The temperature parameter controls the randomness of the predictions. A lower temperature results in less random completions and a higher temperature results in more random completions.

The top_p parameter is used for nucleus sampling that helps reduce the randomness of the model’s output by only considering a subset of the total predictions.

The num_poems parameter allows us to control how many poems we want to generate. The generated poems are then concatenated together to form a single combined poem through the line combined_poem += poem + “\n\n”.

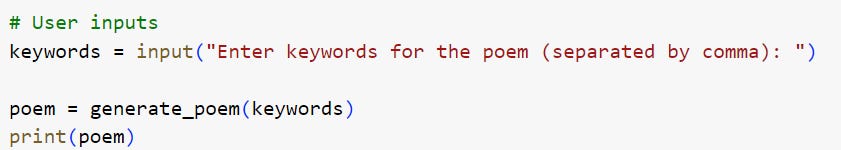

With the above model in place, we can device a user input field for keyword entries and print the poem that’s based on the generate_poem function.

Below is the codebook for the above exercise:

AIQ by Nick Polson and James Scott

What will the future hold? It’s impossible to know, of course, but a few likely trends stand out.

First, language models will become personalized; the machines around you will adapt to the way you speak, just as they adapt to your movie-watching preferences. As a result, they’ll become much better at understanding you. Consider, for example, the historical trajectory of the iPhone. To use the iPhone 6, you had to teach it your thumbprint. To use the iPhone X, you had to teach it your face. It’s not hard to imagine a future iPhone where you first have to read it a bedtime story, to teach it your voice.

Second, good policy and thoughtful regulations will be hugely important if we want to reap the benefits of these new NLP tools, without seeing them put to use in destructive ways. An algorithm that can write an episode of Friends seems cute, if a bit useless. That same algorithm will seem a lot more pernicious when someone can program it to flood the internet with fake news around election time. While we are ultimately optimistic, we are not policy experts, and we don’t know the right answer to this kind of problem. We do know, however, that the problems themselves need to be part of the conversation. In the historical development of every new technology, from fire to gene splicing, there’s always been a moment when “move fast and break stuff” ceased to be a morally tenable position for a grown-up. With computers and language, that moment has arrived.

But even as we recognize the potential downside, let’s not forget the upside, either. If you think that we have clever NLP algorithms and big data sets today, you ain’t seen nothing yet. Think of the hundreds of millions of people out there dictating email to their phones, using Google Translate. or talking to a bot on Facebook or WeChat. Each of these interactions leads to richer models and better performance, since these machines are just piggybacking on the trail of data we leave behind. As they improve -and there are a lot of improvements left to make - we expect these machines to become routine tools in every profession and in every part of life.

CodeChat

CodeChat will continue this month and to be held on June 30 at 5pm EST. The topic is going to be on joiners’ perspectives related to the Generative AI, as well as any questions you may have related to coding. You may sign up here for a meeting reminder or the meeting link is here if you would like to join directly.

Feedback

The Substackers’ message board is a place where you can share your coding journey with me, so that we can exchange ideas and become better together.

Please open the message board and share with me your thoughts!